AI on your Mac, in your pocket, and under pressure: the latest developer tool shifts

A quick roundup of new AI tool moves: hybrid local/cloud assistants on Mac, Codex heading to phones, Claude Code usage debates, and the mounting pressure around safety and security.

Several recent AI stories point to the same broad trend: AI tools are becoming more embedded in everyday workflows, while questions around limits, reliability, and security keep getting sharper.

From a Mac app that blends local and cloud models to coding tools expanding to phones, the latest updates show vendors pushing for more flexibility and reach. At the same time, reporting on usage controls, healthcare note-taking errors, and a recent OpenAI security incident shows that operational trust remains central.

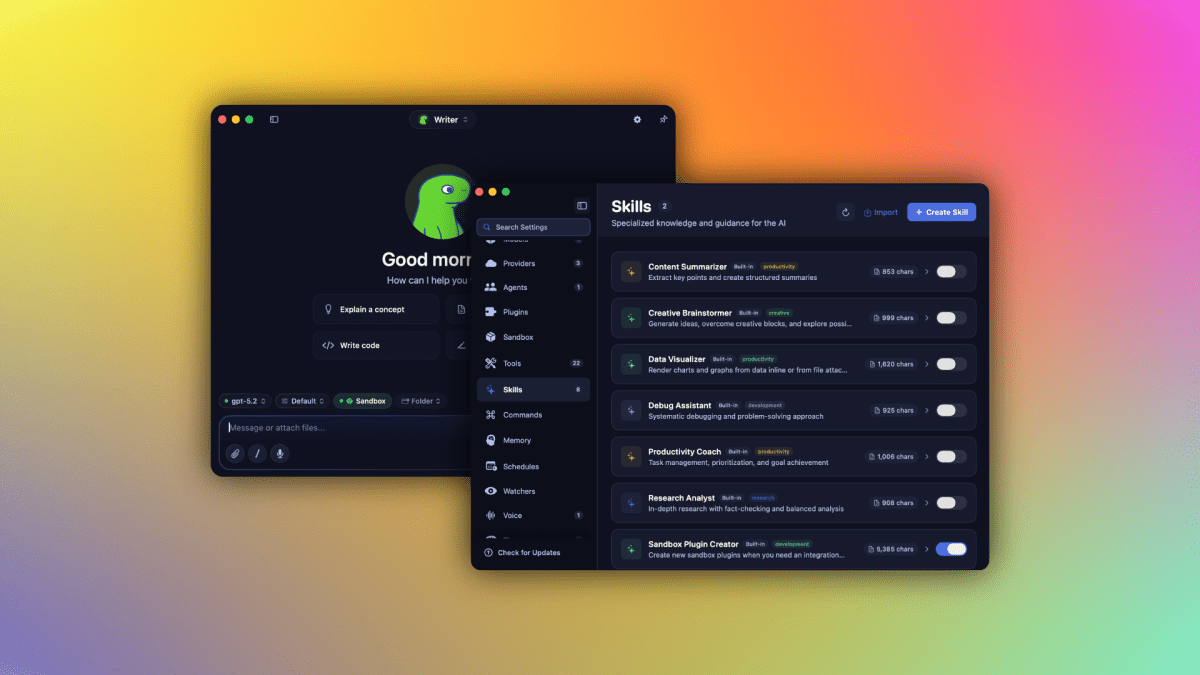

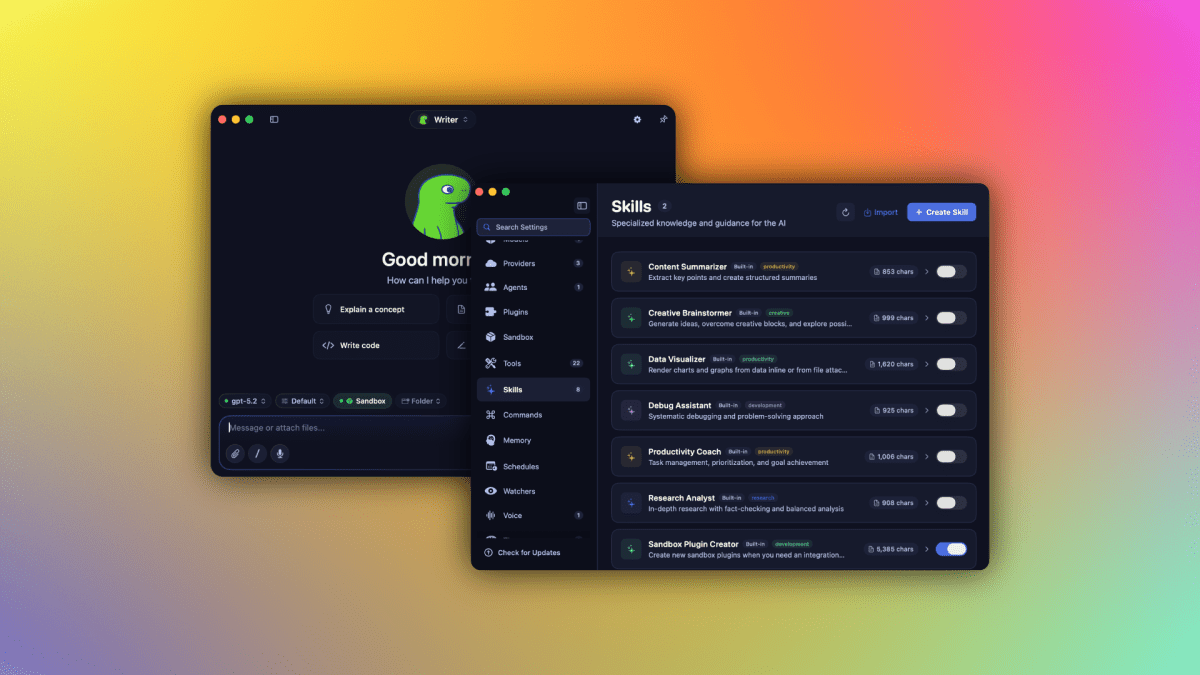

Hybrid AI on the desktop

TechCrunch reports that Osaurus combines local and cloud AI models in a Mac app while keeping users’ memory, files, and tools on their own hardware. Even from that short description, the positioning is clear: users get a mix of on-device and cloud capability without giving up direct control over their working context.

That matters because desktop AI products are increasingly competing on where data lives and how tightly they integrate with the user’s own environment.
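For developers curious what "local context, mixed models" can look like in practice, here is a minimal sketch. It is not Osaurus's implementation, which the TechCrunch description does not detail. Every name in it (`LocalContext`, `local_model`, `cloud_model`, `route`, and the `needs_context` flag) is hypothetical, and both model functions are stand-ins rather than real API calls. The only idea it illustrates is that private memory is attached for the on-device model, while the cloud model sees just the prompt.

```python
from dataclasses import dataclass, field


@dataclass
class LocalContext:
    """User-owned state that stays on the machine in this sketch (hypothetical)."""
    memory: list[str] = field(default_factory=list)


def local_model(prompt: str) -> str:
    # Stand-in for an on-device model call; not a real API.
    return f"[local] {prompt}"


def cloud_model(prompt: str) -> str:
    # Stand-in for a remote model call; it receives only the prompt text.
    return f"[cloud] {prompt}"


def route(prompt: str, ctx: LocalContext, needs_context: bool) -> str:
    """Use the local model when private context is needed, else the cloud model."""
    if needs_context:
        # Private memory is prepended only for the on-device model.
        enriched = " | ".join(ctx.memory + [prompt])
        reply = local_model(enriched)
    else:
        reply = cloud_model(prompt)
    # The prompt history is recorded locally in both cases.
    ctx.memory.append(prompt)
    return reply


if __name__ == "__main__":
    ctx = LocalContext(memory=["prefers tabs"])
    print(route("format my file", ctx, needs_context=True))
    print(route("explain quicksort", ctx, needs_context=False))
```

A real product would decide routing with far more nuance (model capability, latency, cost, user settings); the boolean flag here simply makes the data boundary easy to see.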

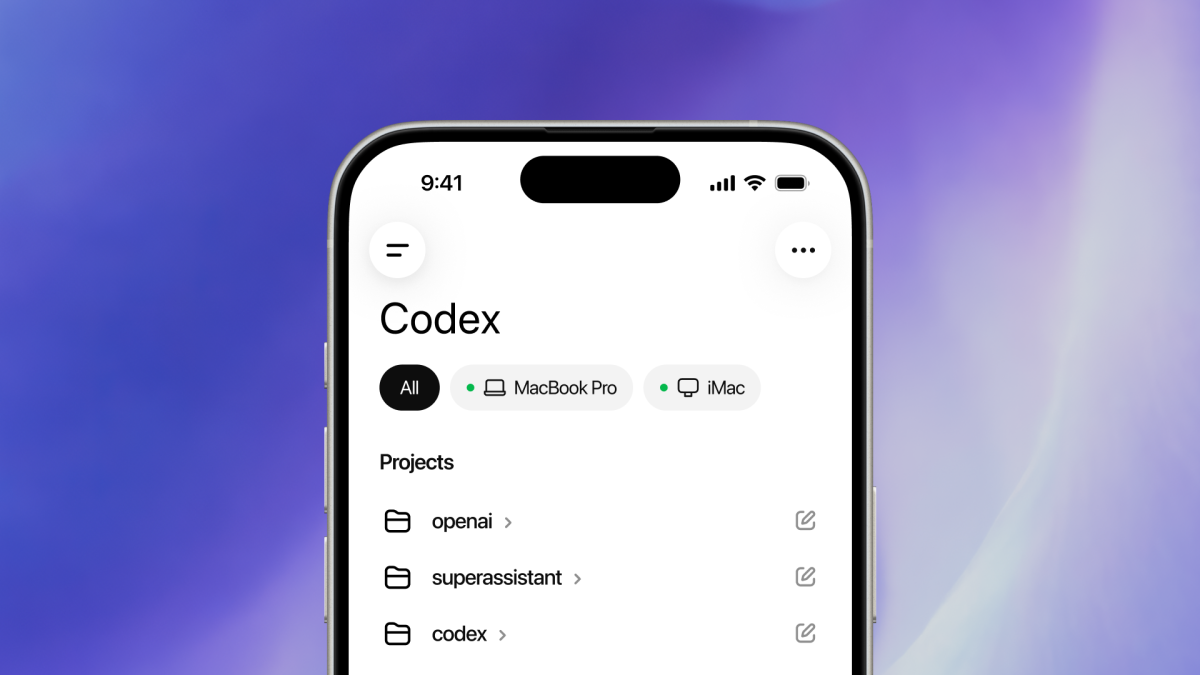

AI coding expands beyond the desktop

OpenAI says Codex is coming to phones, according to TechCrunch. The stated benefit is more flexibility in how users manage their workflows. In practical terms, that signals an effort to make AI coding assistance available wherever users are, not just at a desk.

Another developer-focused tool, Clawdmeter, takes a narrower but useful angle. TechCrunch describes it as an open source gadget that turns Claude Code usage stats into a tiny desktop dashboard for AI coding power users. Together, these updates suggest that AI coding products are maturing not only through core model capability, but also through surrounding workflow and monitoring tools.

Usage limits and transparency stay in focus

Ars Technica's interview with Anthropic product lead Cat Wu frames Claude Code's development philosophy as intentionally restrained. The article centers on usage limits, transparency, and what Wu calls a “lean harness,” and it presents the absence of a sweeping master plan as a deliberate design choice.

“We have no grand plan,” says Anthropic's Cat Wu—but that's by design.

That perspective fits the broader moment. As AI tools become more central to software work, users want clearer expectations around access, limits, and how products behave under load.

Ambition versus reliability

At the far end of AI ambition, TechCrunch reports on Richard Socher's new startup, which wants to build an AI that can research and improve itself indefinitely while still shipping products. The premise points to a familiar tension in the industry: bold claims about autonomous improvement need to be matched by practical product execution.

That tension becomes even more important when AI systems are used in higher-stakes settings.

When mistakes carry real-world consequences

Ars Technica reports that an Ontario audit found AI medical notetakers may be introducing fabricated or incorrect details, including made-up therapy referrals and incorrect prescriptions. This is a sharp reminder that convenience and automation can introduce serious risk when deployed in clinical environments.

Developer tools and productivity assistants can absorb occasional rough edges in a way that healthcare systems cannot. The contrast helps explain why transparency, oversight, and product guardrails remain so important across the AI stack.

Security and partnership strain add more pressure

TechCrunch also reports that OpenAI said hackers stole some data after a recent code security issue. The company said the damage was limited to employees’ devices, did not affect user data or production systems, and did not involve theft of intellectual property.

Separately, TechCrunch says OpenAI is reportedly exploring legal action against Apple over a ChatGPT integration that allegedly failed to deliver the prominence and subscriber gains OpenAI expected. Whether viewed as a partnership dispute or a platform-distribution conflict, it reflects another reality of the current AI market: product success depends not only on models, but also on ecosystem leverage and execution.

The bigger picture

- AI products are moving closer to users’ daily environments, including desktops and phones.

- Workflow flexibility and visibility are becoming product differentiators.

- Trust issues remain unresolved, especially around safety, transparency, and reliability.

- Security incidents and partner tensions can shape perception as much as technical progress.

The net result is an AI landscape that feels simultaneously more useful and more scrutinized. The newest tools promise better integration and convenience, but every expansion in reach seems to bring a matching increase in scrutiny and expectations.

References & Credits

- Osaurus brings both local and cloud AI models to your Mac — TechCrunch

- OpenAI says Codex is coming to your phone — TechCrunch

- Claude Code's product lead talks usage limits, transparency, and the "lean harness" — Ars Technica

- Clawdmeter turns your Claude Code usage stats into a tiny desktop dashboard — TechCrunch

- What happens when AI starts building itself? — TechCrunch

- Your doctor’s AI notetaker may be making things up, Ontario audit finds — Ars Technica

- OpenAI says hackers stole some data after latest code security issue — TechCrunch

- OpenAI is reportedly preparing legal action against Apple; it wouldn’t be the first partner to feel burned — TechCrunch