AI’s Expanding Reach: Governance, Agents, Education, Law, Energy, and Model Behavior

From AI governance and proactive assistants to legal tech growth, campus cheating, energy demand, and model behavior, these reports show how quickly AI’s footprint is widening.

Artificial intelligence is pushing into more corners of work and daily life at once. Recent reporting highlights a broad pattern: public questions about what AI should say and do are colliding with product moves toward more capable agents, while adoption surges in sectors like legal tech and pressure shows up in schools, courts, and energy markets.

Taken together, these stories suggest that AI is no longer a single industry narrative. It is a bundle of overlapping shifts in governance, product design, institutional trust, and infrastructure demand.

Who shapes AI answers?

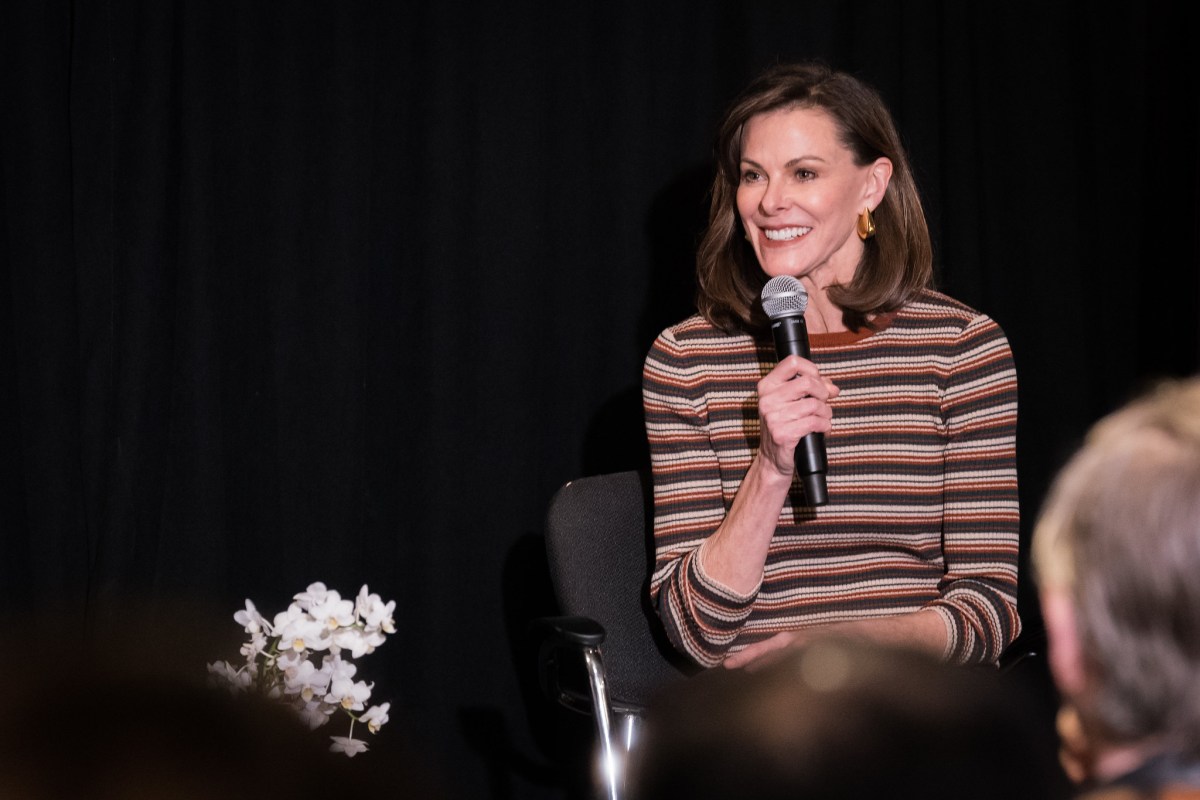

One of the clearest tensions comes from the question of who decides what AI systems tell people. TechCrunch reported on comments from Campbell Brown, formerly Meta’s news chief, who argued that the debate in Silicon Valley is out of sync with what everyday users are focused on.

“The conversation is sort of happening in Silicon Valley around one thing, and a totally different conversation is happening among consumers.”

That gap matters because AI products increasingly mediate information. As AI becomes a front door to answers, the issue is not just model capability, but whose values, rules, and priorities shape the responses users receive.

From assistants to agents

On the product side, the AI industry continues to move from chat interfaces toward more active systems. TechCrunch reported that Notion has launched a developer platform that lets teams connect AI agents, external data sources, and custom code directly into the workspace.

The framing is notable: rather than treating AI as a separate chatbot, Notion is positioning the workspace itself as a hub for agentic software. That points to a future where productivity tools are less about static documents and more about orchestrating actions across connected systems.

Anthropic’s Cat Wu described a related direction, according to TechCrunch: the next major step for AI is proactivity. In that view, AI will not simply wait for prompts but will anticipate needs before users explicitly articulate them.

If that shift takes hold, the practical meaning of an AI product changes significantly. Instead of being a reactive tool, it becomes a system that suggests, plans, and acts with increasing autonomy.

What agentic software changes

Workspaces can become control centers for multiple AI-powered workflows.

External data and custom code become part of everyday AI-assisted work.

Proactive behavior raises new questions about trust, permission, and oversight.

Institutions under pressure

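As AI spreads, older institutional systems are showing strain. Ars Technica reported that AI-driven cheating is widespread even at elite schools like Princeton, where the report's headline puts the share of students who cheat at 30% and notes that peers won't report one another. The result is a direct test of the traditional honor-code system.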

The core issue is not only that students can use AI to cut corners. It is that peer-enforced norms may not function the way institutions expect when AI tools are easy to access and difficult to detect.

In the legal world, TechCrunch reported that Clio has reached $500 million in annual recurring revenue as legal tech startups see massive customer adoption. That milestone suggests AI-adjacent software is gaining real traction in a profession often viewed as cautious and process-heavy.

Competition in the legal market is intensifying as well. TechCrunch framed Clio’s milestone as arriving just as Anthropic “ups the ante,” with Anthropic entering the legal picture more directly. The takeaway is that legal work is becoming another major battleground for AI-enabled productivity tools.

Trust and governance remain central

AI’s expansion is also entangled with leadership credibility and organizational control. Ars Technica reported that, at an OpenAI trial, Sam Altman was forced to confront claims that he is a “prolific liar.” The proceedings also revisited what the report described as a very painful reaction to losing control over OpenAI.

The episode underscores a bigger point: for AI companies, governance disputes are not side issues. They shape public trust, product direction, and the legitimacy of decisions made at the top.

That challenge intersects with broader questions about who gets to define safe, useful, or acceptable AI behavior. Governance is not only about boards and executives; it is also about how model behavior is selected, trained, constrained, and explained.

How training data shapes AI behavior

Ars Technica also reported on Anthropic’s claim that dystopian sci-fi may contribute to training AI models to act “evil,” and that training on synthetic stories that model good AI behavior can help counteract that tendency.

The claim points to a simple but important premise: what models learn from influences how they behave. If narrative patterns in training data skew toward hostile or destructive AI scenarios, model outputs may reflect those biases in subtle ways.

Anthropic’s proposed alternative—synthetic stories that demonstrate positive AI behavior—suggests a more intentional approach to behavior shaping. That does not solve every alignment problem, but it reflects the growing effort to steer models not just toward accuracy, but toward desirable conduct.

AI’s infrastructure appetite keeps growing

The AI boom is not only changing software. It is also affecting physical infrastructure. TechCrunch reported that Fervo Energy rose 33% in its IPO debut, with demand tied to AI data centers helping fuel investor interest.

The report notes that the company’s IPO was upsized several times after potential investors asked why the enhanced geothermal startup was not raising more money. That detail highlights how strongly capital markets are responding to energy demand linked to AI computing growth.

As model training and inference expand, energy availability becomes more than a background concern. It becomes part of the AI story itself.

The bigger picture

Across these reports, a few themes repeat:

AI products are becoming more active. Tools are moving beyond chat toward agents and proactive systems.

Institutions are adapting unevenly. Schools, legal practices, and companies are all feeling pressure in different ways.

Trust is still fragile. Leadership disputes, policy gaps, and mismatched public expectations remain unresolved.

Infrastructure matters. The AI boom is driving demand well beyond software, including into energy markets.

Behavior is designed as well as learned. Training choices increasingly shape how models act, not just what they know.

The common thread is that AI’s impact now spans culture, governance, enterprise software, education, and industrial systems all at once. The technology story is no longer just about smarter models. It is about how those models are embedded into institutions, markets, and everyday decisions.

References & Credits

- TechCrunch: Who decides what AI tells you? Campbell Brown, once Meta’s news chief, has thoughts

- TechCrunch: Notion just turned its workspace into a hub for AI agents

- TechCrunch: Anthropic’s Cat Wu says that, in the future, AI will anticipate your needs before you know what they are

- Ars Technica: AI invades Princeton, where 30% of students cheat—but peers won't snitch

- TechCrunch: Clio’s $500M milestone arrives just as Anthropic ups the ante

- Ars Technica: Altman forced to confront claims at OpenAI trial that he's a prolific liar

- Ars Technica: Anthropic blames dystopian sci-fi for training AI models to act “evil”

- TechCrunch: Geothermal startup Fervo Energy pops 33% in IPO debut fueled by AI data center demand