AI and data platforms accelerate: Amazon Quick, Firestore, and enterprise momentum

Amazon Quick adds conversational analytics and S3 table bucket access, Firestore pushes deeper into agentic app development, and enterprise AI investment keeps heating up.

The latest product updates point to a common theme: AI is becoming more tightly coupled with the data and application layers that enterprises already rely on. From conversational analytics in Amazon Quick to new Firestore capabilities for agentic apps, vendors are pushing to make data more directly usable by both humans and AI systems.

Amazon Quick expands conversational analytics

AWS announced that Amazon Quick now supports Dataset Q&A, a conversational analytics capability that lets users ask natural-language questions directly against enterprise data.

According to AWS, Dataset Q&A complements Dashboard Q&A and allows anyone with dataset access to explore data and get actionable insights in natural language, while still respecting governance controls such as row-level and column-level security policies defined by data owners.

AWS says the feature is powered by Amazon Quick’s text-to-SQL agent, which interprets a user’s question, identifies the right data, and generates SQL in a single conversational step.

Dataset Q&A adds a new way to interact with enterprise data using natural language while preserving existing governance rules.
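
To make the governance point concrete, here is a minimal sketch of how row-level and column-level rules can constrain a generated query before it runs. It is an illustration only, not AWS's implementation: the `POLICY` structure, the `build_governed_query` helper, and the `sales` table are all hypothetical, and an in-memory SQLite database stands in for the enterprise dataset.

```python
import sqlite3

# Hypothetical per-user policy (an assumption for illustration, not an AWS format).
POLICY = {
    # Column-level security: only these columns may be selected.
    "allowed_columns": {"region", "product", "revenue"},
    # Row-level security: a predicate appended to every query for this user.
    "row_filter": ("region = ?", ("EMEA",)),
}


def build_governed_query(table, requested_columns, policy):
    """Return (sql, params) limited to what the policy permits.

    The table name is assumed to come from trusted configuration;
    column names are checked against the allowlist before use.
    """
    denied = [c for c in requested_columns if c not in policy["allowed_columns"]]
    if denied:
        raise PermissionError(f"columns not permitted: {denied}")
    predicate, params = policy["row_filter"]
    columns = ", ".join(requested_columns)
    sql = f"SELECT {columns} FROM {table} WHERE {predicate}"
    return sql, params


def demo():
    """Run a governed query against a small in-memory table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE sales (region TEXT, product TEXT, revenue REAL, customer_email TEXT)"
    )
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?, ?, ?)",
        [
            ("EMEA", "widget", 120.0, "a@example.com"),
            ("APAC", "widget", 300.0, "b@example.com"),
            ("EMEA", "gadget", 80.0, "c@example.com"),
        ],
    )
    # Stand-in for what a text-to-SQL step might request for
    # "What is revenue by product?"
    sql, params = build_governed_query("sales", ["product", "revenue"], POLICY)
    rows = conn.execute(sql + " ORDER BY product", params).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    print(demo())
```

In this sketch the user sees only EMEA rows, and a request for `customer_email` raises `PermissionError` instead of returning data. The design choice worth noting is that the policy is enforced outside the question-interpretation step, so a misread question cannot widen access.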

S3 table buckets become a new data source

In a related update, AWS also said Amazon Quick now supports Amazon S3 table buckets as a data source. That enables users to build dashboards, run conversational analytics, and explore Apache Iceberg tables stored in S3 table buckets.

The company says this removes the need for an intermediate data warehouse or OLAP layer in these scenarios, so teams can work with lakehouse data directly in Amazon Quick for both agentic AI and BI workloads through a simpler architecture.

Highlights of the update include:

- Support for dashboards and conversational analytics on S3 table bucket data

- Exploration of Apache Iceberg tables

- Compatibility with Zero-ETL inputs from sources such as Salesforce, SAP, and Amazon Kinesis Data Firehose

- Near real-time insights with fewer pipeline dependencies

AWS also notes that admins configure S3 table bucket permissions once, after which authors can create datasets and start building immediately.

Firestore targets the agentic app stack

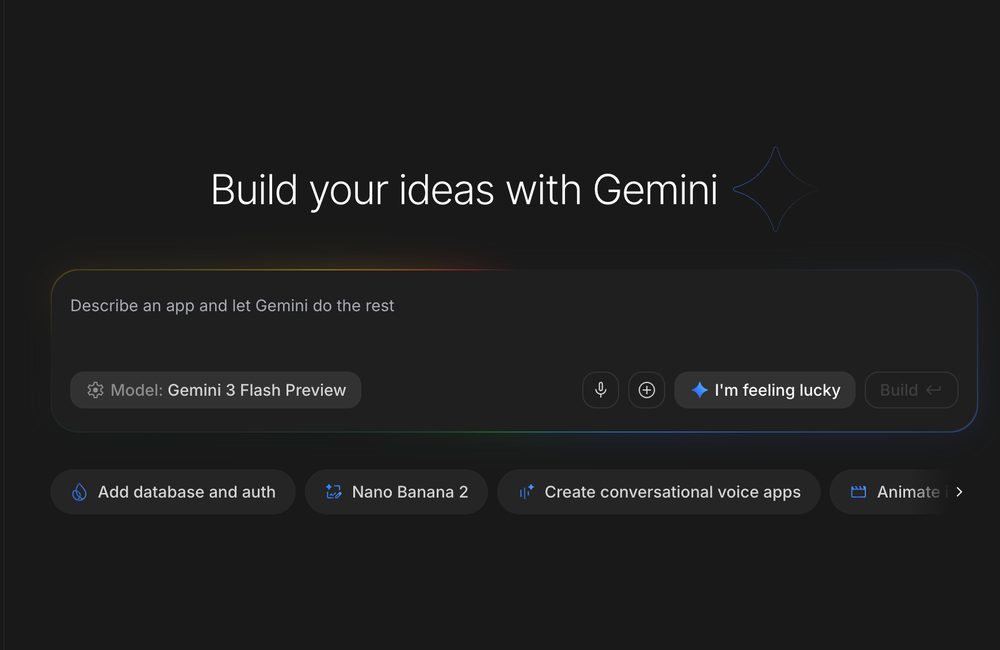

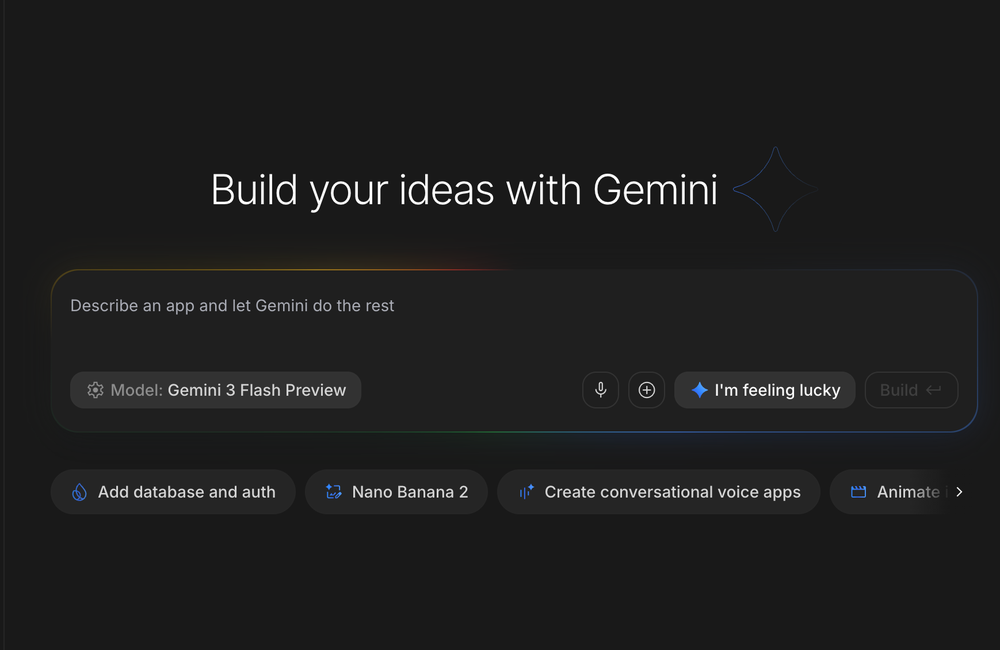

Google Cloud is making a similar argument from the database layer. At Google Cloud Next ’26, the company positioned Firestore as infrastructure for emerging agentic applications, highlighting its scalability and high availability as a fit for AI-driven development.

The update centers on three areas called out by Google Cloud:

- Tighter agentic AI integrations, including new native integrations with AI Studio and third-party coding agents

- Full-text search and expressive queries, intended to help both agents and users retrieve and work with information more effectively

- MongoDB compatibility

The broader message is that databases are being adapted not just for traditional app backends, but for workflows where LLMs and software agents are increasingly active participants.

Enterprise AI competition keeps intensifying

The business backdrop is just as significant as the product news. TechCrunch reported that Sierra raised $950 million, giving the company more than $1 billion to work with. Sierra says it plans to use that capital to become the global standard for AI-powered customer experiences.

The source report offers limited detail, but the size of the raise alone underscores how aggressively companies and investors are pursuing the enterprise AI market.

What these updates suggest

Taken together, these announcements show a clear convergence:

- Analytics tools are becoming conversational

- Data platforms are being simplified for AI and BI access

- Databases are evolving to serve agentic development patterns

- Investors continue to back companies trying to define enterprise AI experiences

What stands out most is how much emphasis vendors are putting on reducing friction between data, queries, and AI interfaces. Whether through natural-language analytics in Amazon Quick or database features designed for coding agents and LLM-connected apps in Firestore, the common goal is directness: fewer layers, faster answers, and systems that can serve both people and AI agents.

References & Credits

- AWS: Amazon Quick introduces Dataset Q&A for conversational analytics against enterprise data

- AWS: Amazon Quick now supports S3 tables bucket as a data source

- Google Cloud: Firestore at Next '26: Unlock agentic development, search and MongoDB compatibility

- TechCrunch: Sierra raises $950M as the race to own enterprise AI gets serious