Enterprise AI in Practice: Customization, Agent Readiness and the New Cost of Operations

Enterprise AI is shifting from model selection to operational readiness: customization, secure agent runtimes, API governance, AgentOps, token economics and developer discipline.

Enterprise AI conversations are moving beyond simply choosing a model or vendor. Across recent reporting, a clearer picture is emerging: organizations now have to think about customization, operational controls, API readiness, cost management and the human skills needed to keep AI-generated systems reliable.

Why enterprise AI cannot be treated like traditional enterprise software

One theme stands out immediately: many organizations are approaching AI adoption the way they once bought enterprise software — by picking a vendor and standardizing on a single approach. The New Stack argues that enterprise AI needs customization instead.

Even from the limited excerpt available, the point is clear: AI adoption does not fit neatly into the old software procurement model. Enterprise needs vary, and organizations should expect adaptation rather than one-size-fits-all deployment.


AI agents are forcing infrastructure and governance questions

Several of the source articles point to the same operational reality: AI agents are changing what enterprise platforms must support.

Secure runtimes for agentic systems

In coverage of OpenShell, which Jensen Huang and Bill McDermott are backing to secure enterprise AI agents, The New Stack notes that the software stack behind enterprise applications was built for humans, with assumptions around human-speed interaction, human-managed credentials and human oversight. That framing suggests a mismatch between existing enterprise systems and the needs of AI agents.

If agentic systems operate differently from human users, then runtime security, credential handling and control planes become central enterprise concerns rather than edge cases.
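The source does not describe how OpenShell itself works, so the following is only a minimal, hypothetical Python sketch of one idea the paragraph points to: giving an agent its own narrowly scoped, short-lived credential instead of reusing a human's long-lived one. `AgentCredential`, `issue_credential`, `authorize` and the scope strings are illustrative names, not part of any product API.

```python
from dataclasses import dataclass
import time


@dataclass(frozen=True)
class AgentCredential:
    """Hypothetical credential issued to a software agent, not a person."""
    agent_id: str
    scopes: frozenset   # explicit allow-list of actions, e.g. {"orders:read"}
    expires_at: float   # Unix timestamp; agent credentials are short-lived


def issue_credential(agent_id, scopes, ttl_seconds=300, now=None):
    """Mint a narrowly scoped credential that expires after ttl_seconds."""
    now = time.time() if now is None else now
    return AgentCredential(agent_id, frozenset(scopes), now + ttl_seconds)


def authorize(cred, action, now=None):
    """Allow an action only if the credential is unexpired and the action is in scope."""
    now = time.time() if now is None else now
    if now >= cred.expires_at:
        return False  # expired: the agent must request a fresh credential
    return action in cred.scopes


if __name__ == "__main__":
    cred = issue_credential("invoice-agent", {"orders:read"}, ttl_seconds=300, now=1000.0)
    print(authorize(cred, "orders:read", now=1100.0))   # True
    print(authorize(cred, "orders:write", now=1100.0))  # False: not in scope
    print(authorize(cred, "orders:read", now=1400.0))   # False: expired at 1300
```

The design choice being illustrated is deny-by-default: an agent can only do what it was explicitly granted, and only for a few minutes, which limits the damage if it misbehaves at machine speed.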

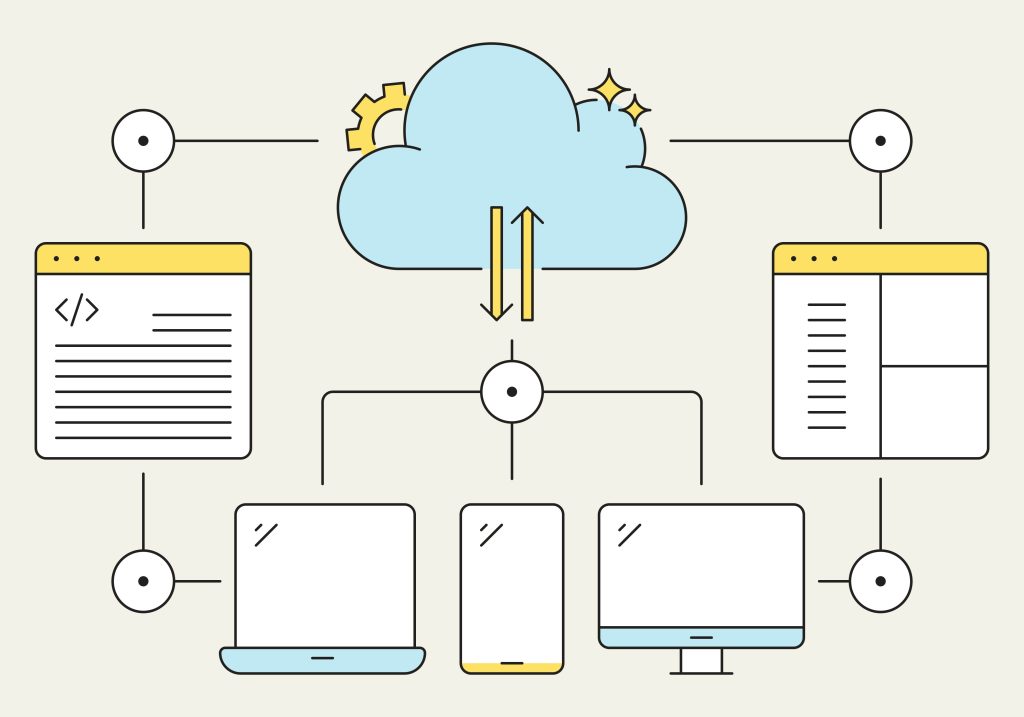

API portals as a readiness signal

The same readiness question appears in API governance. The article on API portals argues that the state of a company's API portal is the clearest signal of whether it can handle AI agents. The reported conversation with Kin Lane emphasizes parallels between API maturity and AI-agent readiness.

Taken together, these articles suggest that enterprises cannot bolt agents onto weak interfaces or poorly governed APIs. Agent adoption depends on the operational shape of the underlying platform.

- APIs need to be discoverable and usable.

- Governance needs to support automated consumers, not just human developers.

- Security assumptions built for people may not hold for software agents.
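As a rough illustration of what "discoverable and usable" might mean in practice, the sketch below scans an OpenAPI 3-style description (loaded as a Python dict) for operations an automated consumer would struggle with. The three checks, and the `readiness_gaps` function itself, are assumptions for illustration rather than a standard or Kin Lane's criteria; only the field names (`paths`, `operationId`, `summary`, `description`, `security`) come from the OpenAPI specification.

```python
# HTTP methods that can appear as operation keys under an OpenAPI path item.
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def readiness_gaps(spec):
    """Return (METHOD, path, problem) tuples for operations an agent may not be able to use safely."""
    gaps = []
    global_security = spec.get("security")  # document-wide default, if any
    for path, item in spec.get("paths", {}).items():
        for method, op in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters" or "summary"
            if not op.get("operationId"):
                gaps.append((method.upper(), path, "missing operationId"))
            if not (op.get("summary") or op.get("description")):
                gaps.append((method.upper(), path, "undocumented"))
            # An absent or empty security list means no authentication is declared.
            if not op.get("security", global_security):
                gaps.append((method.upper(), path, "no security requirement"))
    return gaps


if __name__ == "__main__":
    spec = {
        "paths": {
            "/orders": {
                "get": {
                    "operationId": "listOrders",
                    "summary": "List orders",
                    "security": [{"oauth": ["orders:read"]}],
                },
                "post": {"summary": "Create an order"},
            }
        }
    }
    for gap in readiness_gaps(spec):
        print(gap)
```

In this example the `GET` operation passes, while `POST /orders` is flagged twice: it has no `operationId` for an agent to reference, and it declares no security requirement.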

From experimentation to production: the rise of AgentOps

Red Hat’s updates to Red Hat AI 3.4 are presented as an effort to close the gap between AI experiments and production. The article frames this as a bet on AgentOps.

That matters because enterprise AI initiatives often stall between prototype and deployment. If organizations need customization, secure runtimes and better API governance, they also need operations practices that can manage those systems once they are live.

The source excerpt does not provide implementation specifics, but it does make the high-level takeaway explicit: production AI requires more than model experimentation.
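Because the Red Hat article does not spell out what AgentOps tooling looks like, here is only a minimal, assumption-laden Python sketch of one practice often discussed under that label: recording each step of an agent run (name, outcome, duration) so failures can be inspected afterward. `RunTracer` and `StepRecord` are hypothetical names; a production setup would export these records to a tracing or logging backend instead of keeping them in memory.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass
class StepRecord:
    run_id: str
    step: str          # e.g. "plan", "tool:search", "respond"
    ok: bool
    duration_s: float
    error: str = ""


@dataclass
class RunTracer:
    """Hypothetical in-memory tracer for one agent run."""
    run_id: str
    records: List[StepRecord] = field(default_factory=list)

    def record(self, step: str, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        """Run one agent step, recording success or failure and how long it took."""
        start = time.perf_counter()
        try:
            result = fn(*args, **kwargs)
        except Exception as exc:
            self.records.append(
                StepRecord(self.run_id, step, False, time.perf_counter() - start, repr(exc)))
            raise  # surface the failure to the caller after recording it
        self.records.append(StepRecord(self.run_id, step, True, time.perf_counter() - start))
        return result

    def failed_steps(self) -> List[str]:
        return [r.step for r in self.records if not r.ok]


if __name__ == "__main__":
    tracer = RunTracer("run-42")
    tracer.record("lookup", lambda order_id: {"id": order_id}, "A-17")
    try:
        tracer.record("parse", int, "not-a-number")  # simulated failing step
    except ValueError:
        pass
    print(tracer.failed_steps())  # ['parse']
```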

The FinOps shift: from cloud bills to token economics

Another major operational change is financial. The New Stack reports that the new FinOps problem is not traditional cloud billing. Instead, the focus is shifting toward AI token economics.

Even with limited article text, the framing is notable. It implies that AI cost management introduces a different optimization problem from standard infrastructure spending. In other words, enterprises are not just scaling compute — they are managing usage patterns tied to prompts, models and agent behavior.

For teams building AI systems, that means cost awareness has to move closer to application behavior, not stay isolated in infrastructure dashboards.
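To make the shift concrete: hosted model APIs commonly bill per token, with separate rates for input (prompt) and output (completion) tokens, so spend can be attributed to the application feature that generated each call. The sketch below assumes a usage log of `(feature, model, input_tokens, output_tokens)` rows; the model names and per-million-token prices are made up for illustration and do not reflect any provider's actual rates.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_million: float   # USD per 1M input (prompt) tokens
    output_per_million: float  # USD per 1M output (completion) tokens


# Hypothetical models and prices, for illustration only.
PRICES = {
    "large-model": Price(input_per_million=5.00, output_per_million=15.00),
    "small-model": Price(input_per_million=0.50, output_per_million=1.50),
}


def request_cost(model, input_tokens, output_tokens):
    """Cost of one call: tokens times the per-token rate, for input and output separately."""
    p = PRICES[model]
    return (input_tokens * p.input_per_million + output_tokens * p.output_per_million) / 1_000_000


def cost_by_feature(usage_log):
    """Aggregate spend per application feature rather than per cloud account."""
    totals = defaultdict(float)
    for feature, model, input_tokens, output_tokens in usage_log:
        totals[feature] += request_cost(model, input_tokens, output_tokens)
    return dict(totals)


if __name__ == "__main__":
    usage_log = [
        ("support-agent", "large-model", 2_000, 500),
        ("support-agent", "large-model", 3_000, 1_000),
        ("summarize", "small-model", 10_000, 1_000),
    ]
    for feature, usd in cost_by_feature(usage_log).items():
        print(f"{feature}: ${usd:.4f}")
```

With these invented prices, the two support-agent calls come to about $0.0475 and the summarization call to about $0.0065. Grouping by feature rather than by account is what lets a team see which prompts, models or agent behaviors are driving the bill.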

Fresh data remains a practical bottleneck

AI systems also need current data, and that creates integration pressure. One article highlights how AI teams can spend months building web scrapers for work that SerpApi, a search-results API, promises to replace with a single API call.

The practical point is less about any one vendor than about the recurring challenge: keeping AI systems supplied with fresh external information is often messy and time-consuming. For enterprises, this reinforces the larger theme that data access and integration design are core parts of AI architecture.
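Whether the data comes from an in-house scraper or a hosted search API, the application still has to decide when cached results are too old to use. A minimal sketch, assuming a simple time-to-live policy and a caller-supplied `fetch` function standing in for whichever data source a team uses (both hypothetical here):

```python
import time
from typing import Callable, Dict, Tuple


class FreshnessCache:
    """Serve cached external data until it is older than max_age_s, then refetch it."""

    def __init__(self, fetch: Callable[[str], str], max_age_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch          # hypothetical data source: scraper or search API client
        self._max_age_s = max_age_s
        self._clock = clock          # injectable so staleness can be tested deterministically
        self._entries: Dict[str, Tuple[float, str]] = {}

    def get(self, query: str) -> str:
        now = self._clock()
        entry = self._entries.get(query)
        if entry is not None and now - entry[0] < self._max_age_s:
            return entry[1]          # still fresh: skip the external call
        value = self._fetch(query)   # missing or stale: fetch and timestamp it
        self._entries[query] = (now, value)
        return value


if __name__ == "__main__":
    calls = []

    def fake_fetch(query: str) -> str:
        calls.append(query)
        return f"results for {query!r} (fetch #{len(calls)})"

    fake_now = [0.0]
    cache = FreshnessCache(fake_fetch, max_age_s=60, clock=lambda: fake_now[0])
    print(cache.get("gpu prices"))  # fetch #1
    fake_now[0] = 30.0
    print(cache.get("gpu prices"))  # still fetch #1: served from cache
    fake_now[0] = 61.0
    print(cache.get("gpu prices"))  # fetch #2: entry was stale
```

The TTL is the knob that trades freshness against cost and rate limits; a real system would likely choose it per data type rather than globally.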

The human risk: developers who cannot debug AI-generated code

Not every challenge is architectural or financial. The New Stack also warns that AI is creating a generation of developers who cannot debug their own code. The excerpt describes a situation where everything appears fine on the surface — tests pass, review looks clean — but the deeper engineering understanding may be missing.

This is an important counterweight to the enthusiasm around AI acceleration. If developers rely on AI output without building the ability to reason about failures, enterprises may gain short-term speed while increasing long-term fragility.
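A small, hypothetical example of the "tests pass, review looks clean" failure mode: a chunking helper whose only test uses an input that divides evenly. Nothing here comes from the article; it simply shows the kind of quiet defect a developer has to be able to reason about on their own.

```python
def chunk(items, size):
    """Split items into consecutive lists of at most `size` elements (buggy version)."""
    # Bug: stopping the range at len(items) - size + 1 silently drops a trailing partial chunk.
    return [items[i:i + size] for i in range(0, len(items) - size + 1, size)]


def chunk_fixed(items, size):
    """Correct version: step through the whole list; the last slice may be shorter."""
    return [items[i:i + size] for i in range(0, len(items), size)]


if __name__ == "__main__":
    # The kind of test that passes and looks fine in review: the input divides evenly.
    assert chunk([1, 2, 3, 4], 2) == [[1, 2], [3, 4]]
    # The edge case the test never exercised:
    print(chunk([1, 2, 3, 4, 5], 2))        # [[1, 2], [3, 4]]: item 5 is lost
    print(chunk_fixed([1, 2, 3, 4, 5], 2))  # [[1, 2], [3, 4], [5]]
```

Spotting this requires understanding what the range bounds mean, not just confirming that the existing test is green.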

That concern fits with the rest of the coverage. Enterprise AI success is not only about models and platforms; it also depends on whether teams can operate, inspect and correct what those systems produce.

A more realistic picture of enterprise AI

Across these articles, enterprise AI looks less like a single product choice and more like a stack of organizational capabilities:

- Customization instead of generic deployment.

- Secure runtimes and controls for AI agents.

- API maturity as a prerequisite for agent adoption.

- AgentOps to move from demos into production.

- FinOps models that account for token economics.

- Data pipelines that keep systems current.

- Developer skills that preserve debugging and engineering judgment.

That combination is a useful reality check. The hard part of enterprise AI is not just getting a model to work. It is building the surrounding systems, governance and habits that make AI useful, controllable and sustainable inside a real organization.

References & Credits

- Why enterprise AI needs customization — The New Stack

- The new FinOps problem isn’t cloud bills — The New Stack

- Jensen Huang and Bill McDermott bet on OpenShell to secure enterprise AI agents — The New Stack

- The API portal is the clearest signal of whether your company can handle AI agents — The New Stack

- AI is creating a generation of developers who can’t debug their own code — The New Stack

- Red Hat is betting on AgentOps to close the gap between AI experiments and production — The New Stack

- AI teams are spending months on web scrapers that SerpApi replaces with one API call — The New Stack