Google will invest as much as $40 billion in Anthropic

This follows a similar, but smaller, investment by Amazon just days ago.

Google plans to invest up to $40B in Anthropic as AI rivals race to secure massive compute capacity, following Anthropic’s limited release of its powerful, cybersecurity-focused Mythos model.

At Google Cloud Next, we’re announcing a range of compute capabilities that run your core general-purpose and AI workloads for the agentic world with higher performance and lower costs. Why it matters: IT leaders and builders must balance compute investments and resources between agentic AI and general-purpose use cases, including web servers, databases, and enterprise applications…

AI is evolving from answering questions to reasoning and taking action. Companies that want to lead in today’s agentic era need computing infrastructure designed and optimized for these new requirements. Today at Google Cloud Next, we are introducing new AI infrastructure capabilities that help you innovate faster, deliver compelling user and customer experiences, and optimize for cost and energy…

The era of agentic AI is accelerating from human-speed to machine-speed operations, while also putting profound stress on legacy technology infrastructure. This new reality pushes foundational systems to their limits: agents generate thousands of internal messages and complex queries and spawn more agents, all of which can rapidly overwhelm traditional networks and databases and expose new security…

Today at Google Cloud Next, we’re announcing new capabilities in Google Distributed Cloud (GDC) that bring Gemini and our advanced AI stack to wherever your data is, so you don’t need to compromise between AI innovation and sovereignty. This will serve as a catalyst for a sovereign neocloud architecture. GDC brings Google Cloud to wherever you need it: in your own data center or at the edge.

Editor’s note: This blog post outlines Google Cloud’s GPU AI/ML infrastructure reliability strategy and will be updated with links to new community articles as they appear. As we enter the era of multi-trillion-parameter models, computational power has shifted from a utility to a mission-critical strategic asset. To meet relentless training demand, organizations are no longer just building…

Anthropic bulked up its compute deal with Google and Broadcom as the company has seen its run-rate revenue surge to $30 billion.

At Google, we are committed to being transparent about the environmental impact of our AI infrastructure, publishing metrics on the lifetime emissions of our chips, from manufacturing through powering them in the data center. Today, we are updating these metrics for our seventh-generation TPU, Ironwood, which demonstrates an approximately 3.7x improvement in Compute Carbon Intensity (CCI) compared…

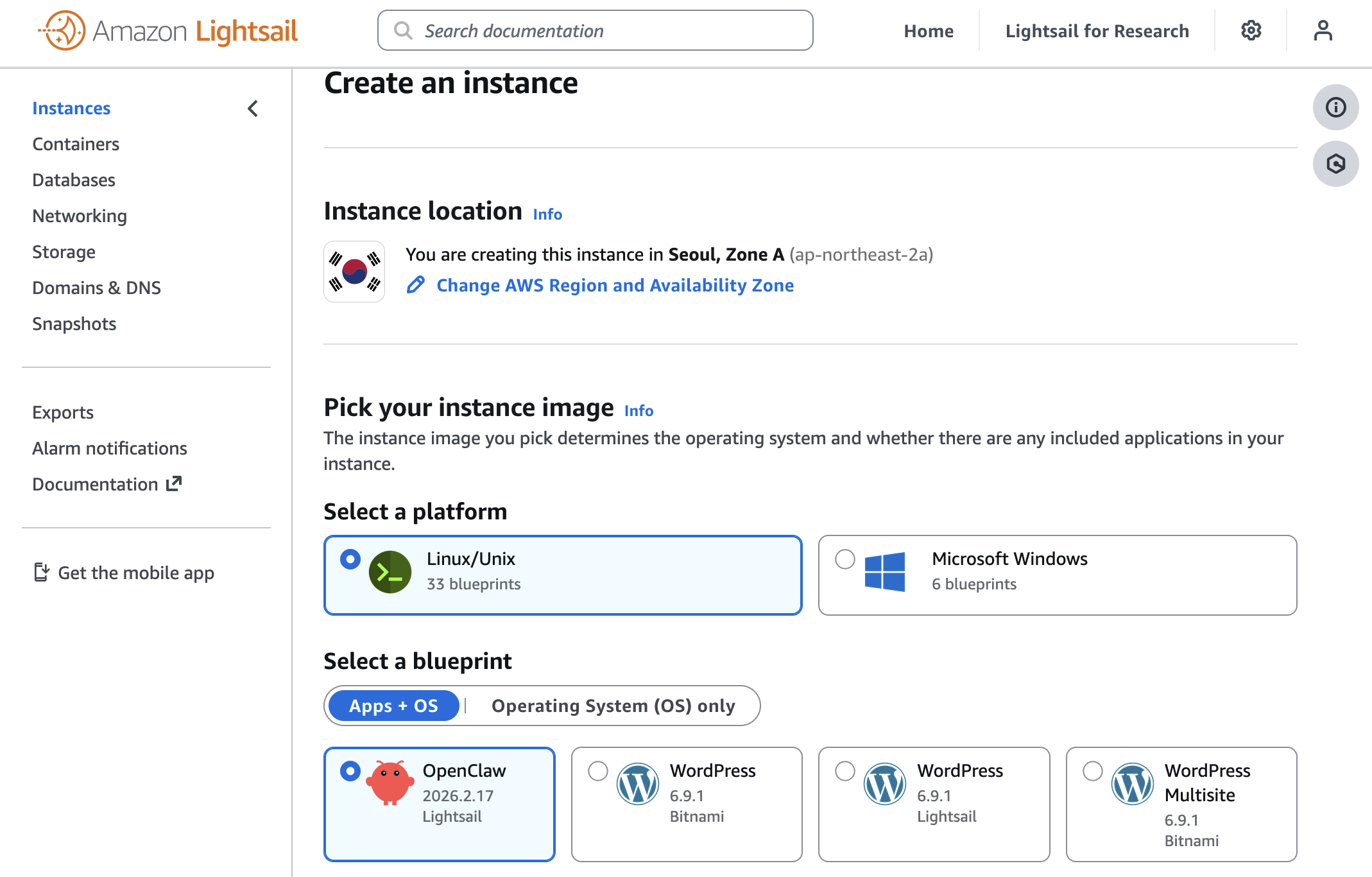

AWS launches OpenClaw on Amazon Lightsail, letting you run an OpenClaw instance, pair it with your browser, enable AI capabilities, and optionally connect messaging channels. Your Lightsail OpenClaw instance comes pre-configured with Amazon Bedrock, so you can start using your AI assistant immediately, with no additional configuration required.

Amazon EC2 Hpc8a instances, powered by 5th Gen AMD EPYC processors, deliver up to 40% higher performance, increased memory bandwidth, and 300 Gbps Elastic Fabric Adapter networking, helping customers accelerate compute-intensive simulations, engineering workloads, and tightly coupled HPC applications.